Week 5: New Considerations: Hardware Peripherals

By Erika Goering

Filed under: Degree Project, KCAI, Learning

One idea I had originally been hesitant to try was introducing theoretical hardware into the project to make the technology less invasive. By developing something wearable, I’m starting to make the technology less of a burden and a more normal part of the person’s daily life.

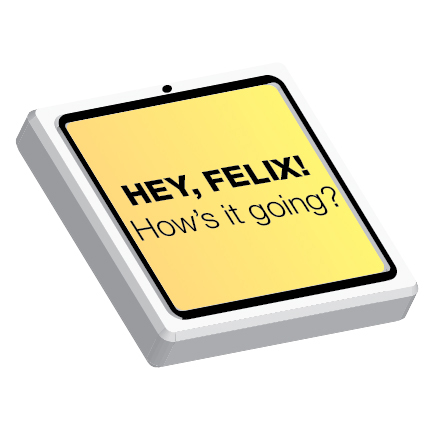

Another issue that was raised was the burden of requiring multiple hearing users to download the phone app in order to use “multi-device” mode. My challenge then became finding a way to eliminate the need for multiple devices. I’m getting rid of “multi-device” mode and replacing it with a kind of broadcast mode, where the Deaf user is the only person with the app and hardware. The hardware handles input, and the app displays the translated text output for everyone to see. (Sketches of this are coming soon!)

I’ve also updated my scenarios:

- One-on-one mode: Alison, who is hard-of-hearing, orders food at a noisy restaurant. The waiter asks her a question, such as, “Do you want fries or mashed potatoes?” Because of the app, she can understand and reply to the question with ease!

- Multi-user mode: Felix, who is Deaf, is hanging out with a group of hearing friends at a coffee shop. They have a rather energetic, quick-paced chat about school, and they can all understand each other without the need for lip-reading or slowing down (they’re all snacking and drinking coffee anyway, which makes lip-reading more difficult by nature).

- Movie mode: An as-of-yet-unnamed user goes to a movie theater without open captions. She is able to skip the awkward process of signing out a pair of closed-captioned glasses at the front desk, and instead queues up the movie’s subtitles on her phone. When the movie starts, she hits the “sync” button and places the phone in her cup holder for easy viewing.

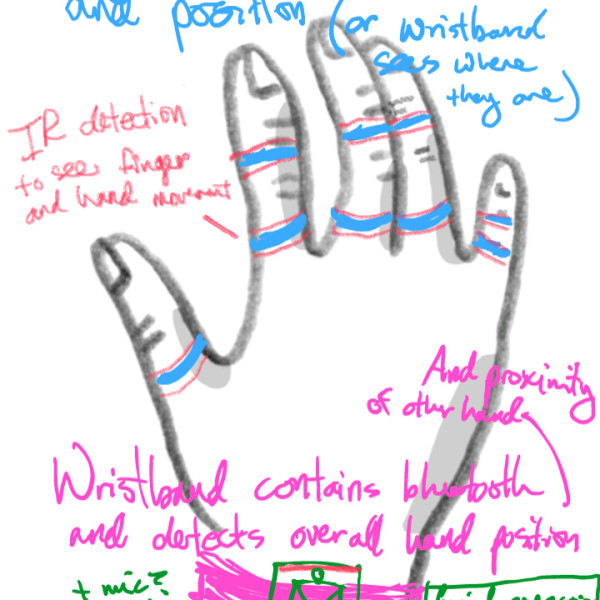

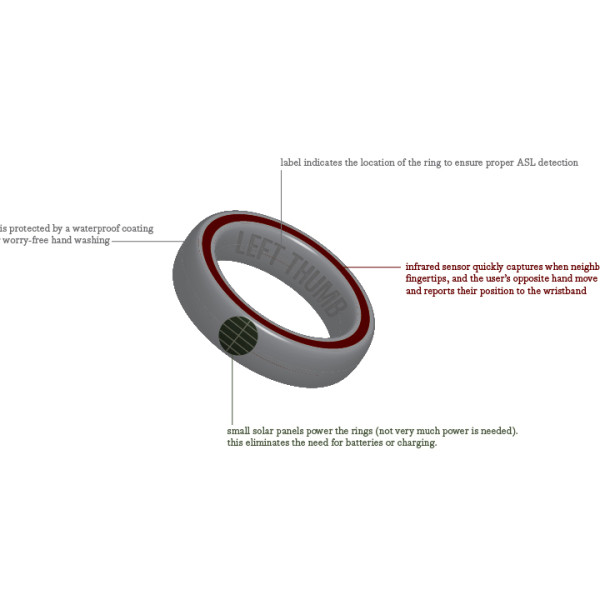

- A set of rings detects and reports finger movement, as well as each finger’s proximity to the other fingers and to the main wristband sensor. The ASL movements are sent to the user’s phone and translated into English text.

- Each ring has an infrared sensor that detects finger movement and reports it back to the wristband.

- The wristband’s display unit receives finger and hand movement data and sends it to the user’s phone for translation.

- The wristband’s display transcribes speech into text for the Deaf user.

- The wristband unit includes a camera for detecting facial expression.

Next step: branding and visual exploration. I kind of put that stuff on hold so I could focus on exploring the hardware a bit more. It’s time to get back on track!