Problem, Solutions, and Next Steps

By Erika Goering

Filed under: Degree Project, KCAI, Learning

Problem: There is a language barrier between spoken English and American Sign Language (ASL). We need a conversational solution for situations where interpreters, writing, and texting aren’t available, effective, or efficient enough.

Possible solutions:

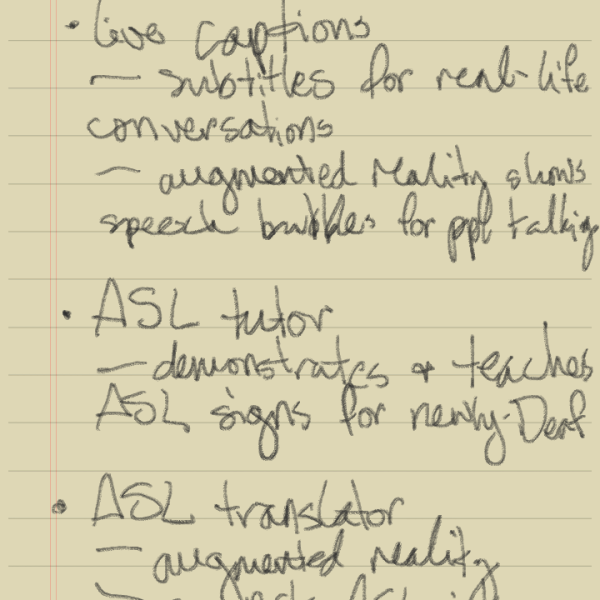

- A mobile app with two sides. On the Deaf side, the user quickly assembles sentences from a set of nouns, verbs, emotions, and gestures. The app then “translates” for the hearing recipient, either as text on the device’s screen or as text-to-speech through the device’s speakers. On the hearing/speaking side, “live captions” turn spoken language into text, while inflection and mood show up on screen as UI changes or icons (or some other way of depicting emotional changes, possibly through typography).

- A messenger app built strictly on an icon-based system, without relying on text or verbal language. This would be a kind of universal language between users, eliminating the need for translation rather than facilitating it. It would work like texting, but with a nonverbal vocabulary (because ASL and English aren’t directly translatable).

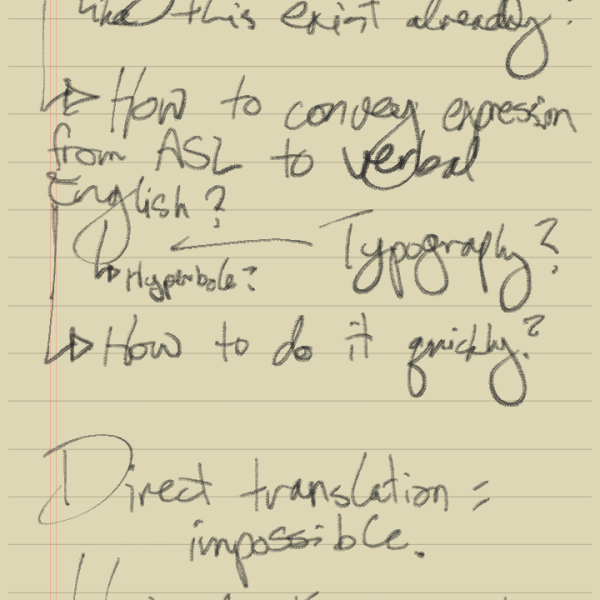

- thoughts about translation

- what the tool could be/do

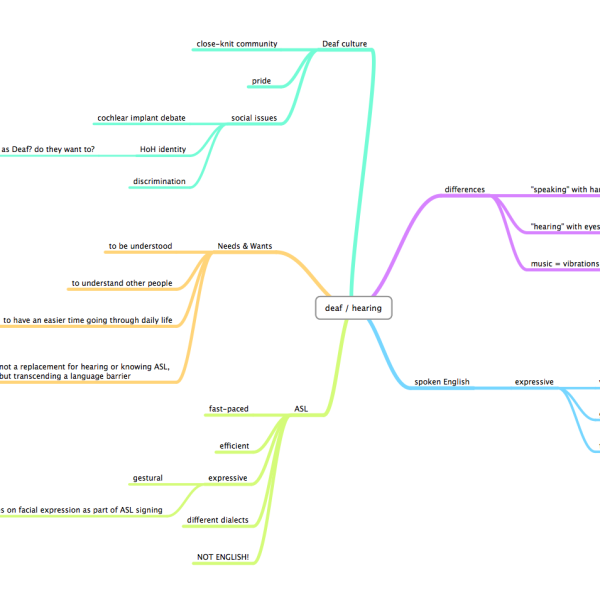

- mind map

Perhaps the most exciting thing I’ve discovered so far is that nothing quite like what I have in mind exists at the moment (a two-way tool specifically designed to replace or supplement interpreters, lip-reading, writing things down, or even translation itself). In addition to overcoming language barriers between ASL and spoken English, I also want to find a way to maintain nonverbal elements of communication, like emotion/expression, subtext, inflection, etc. These are very important elements of both spoken English and ASL, but they are used/manifested in very different ways that may not translate as easily as basic verbal language does. My ultimate goal is to lose as little as possible in the translation between (or replacement of) these two methods of communication and to make communication easier.

Next Steps:

- Verbal and visual audit. I’ve already done a little bit of this, but I need to organize what I’ve already got and explore a bit more as well. I’ll be talking with some interpreters and members of the Deaf community soon.

- Communication model. This will show exactly where and how communication between hearing and non-hearing people breaks down and give insight into how it can be remedied.

- Process timeline. I need to plot out a manageable way of making all this stuff happen.